Intro

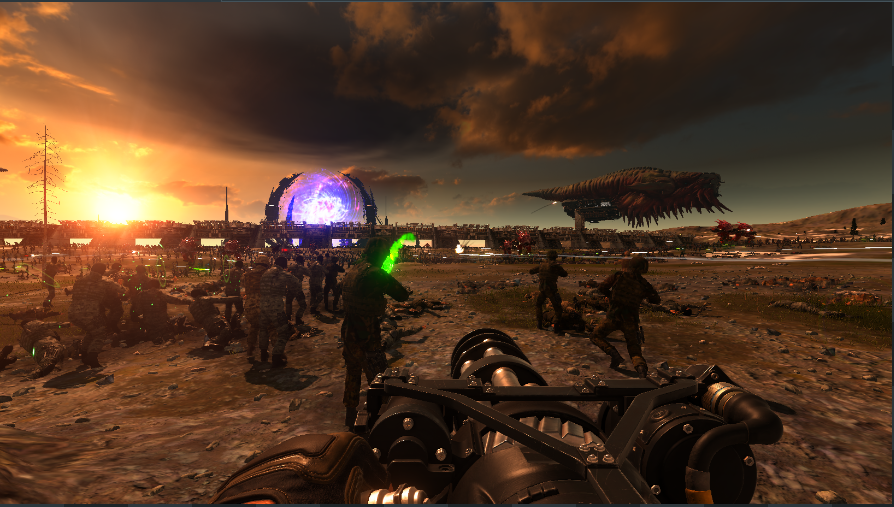

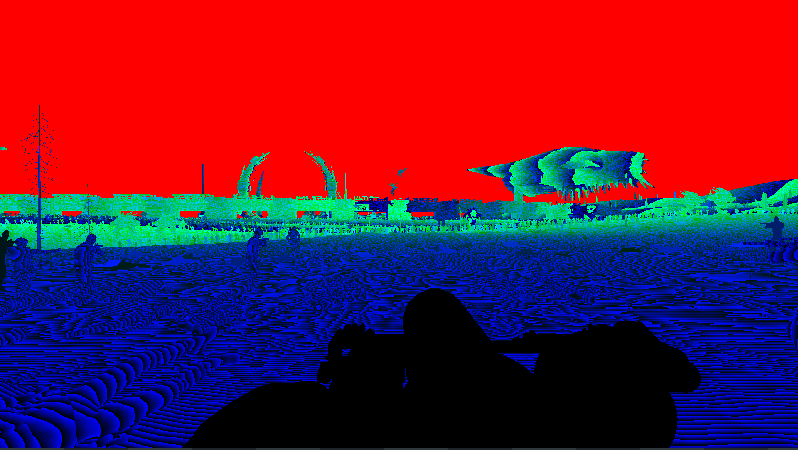

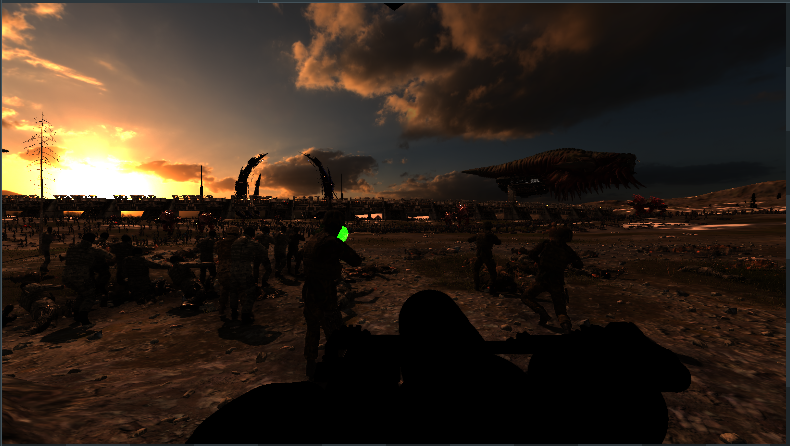

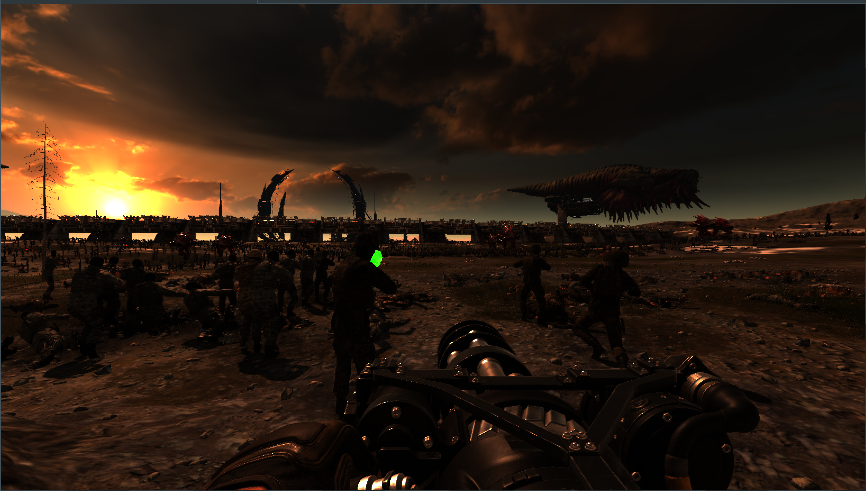

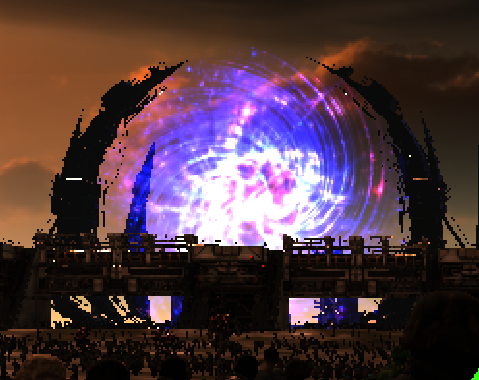

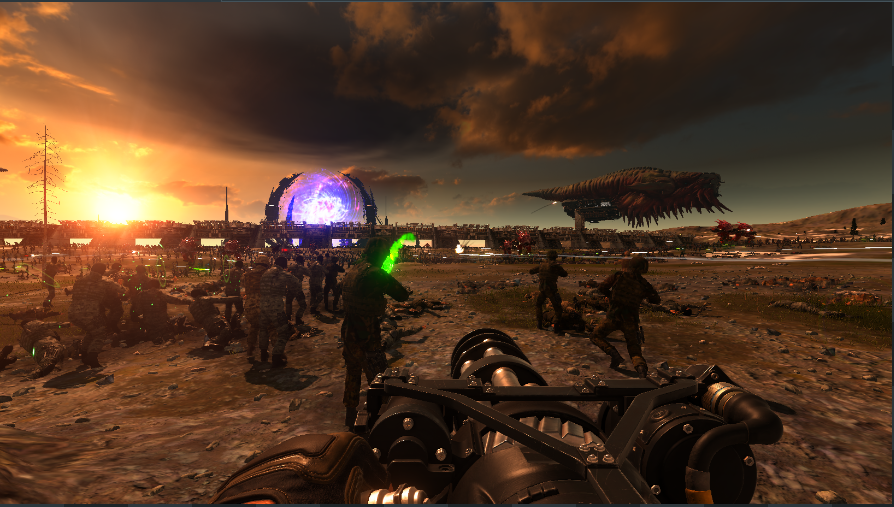

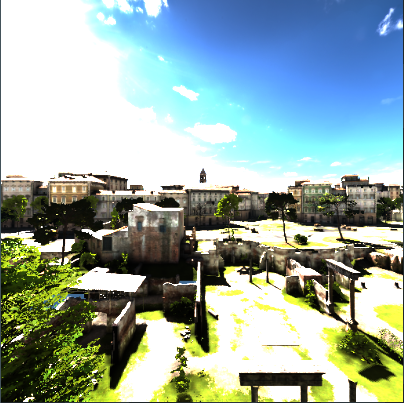

Out of curiosity I used RenderDoc to dig a bit into Serious Sam 4. The first frame we are going to dissect is the following:

Shadow Maps

The game starts by rendering the shadow maps. Only the sun has shadows: 4 cascades are rendered to separate textures, with sizes ranging from 2048 to 5120 depending on the settings.

Whether the terrain is rendered or not also depends on the settings.
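To put the cascade sizes in perspective, here is a rough memory estimate for the four sun cascades at the smallest and largest sizes mentioned above. The 4 bytes per texel figure is an assumption (a 32-bit depth format); the actual format was not checked.

```python
# Rough memory estimate for the sun's cascaded shadow maps.
# ASSUMPTION: 4 bytes per texel (e.g. a 32-bit depth format); the real
# texture format used by the game is not stated in this post.
BYTES_PER_TEXEL = 4
CASCADES = 4


def cascade_memory_mib(size, cascades=CASCADES, bytes_per_texel=BYTES_PER_TEXEL):
    """Total size in MiB of `cascades` square depth textures of side `size`."""
    return cascades * size * size * bytes_per_texel / (1024 * 1024)


for size in (2048, 5120):
    print(f"{size}x{size}: {cascade_memory_mib(size):.0f} MiB")
```

Under that assumption the highest setting needs more than six times the memory of the lowest one.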

Pre pass

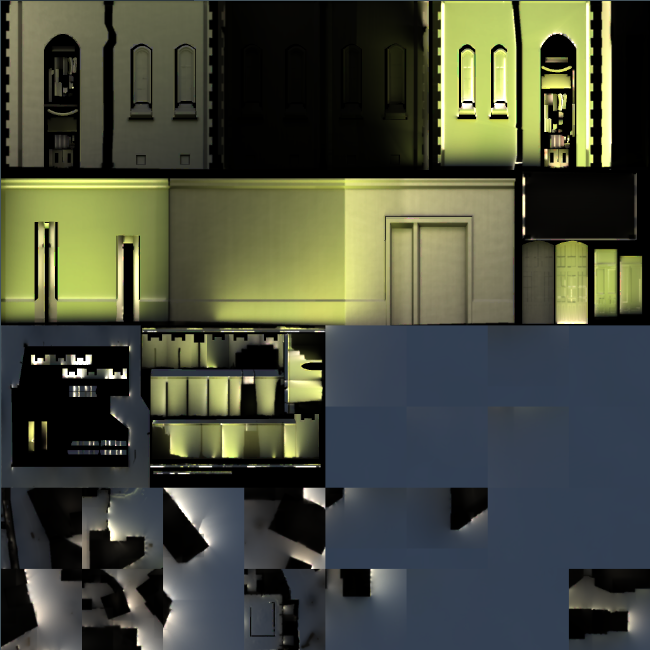

The first pass on the scene has two render targets. After every opaque element of the scene has been drawn, they look like this:

On lower settings one of the buffers looks like this:

So one of them is clearly the depth buffer. I’m not sure about the other one. We will see how the various elements are drawn later.

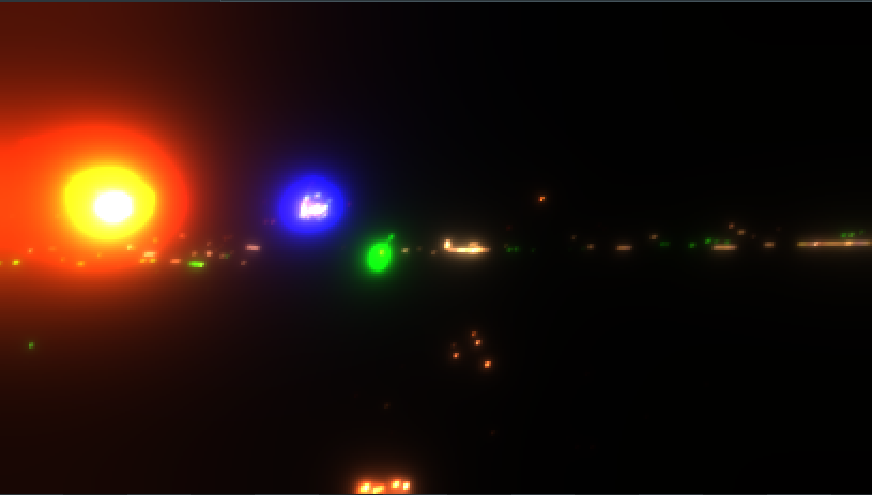

Light pass

For each light in the scene a drawcall is made. The drawcall draws a sphere (made of 960 vertices) around the light; the actual light calculation is performed in the pixel shader and stored in an RGB16 HDR buffer. The only inputs to this pass are the two buffers generated in the pre pass. Normals are probably reconstructed from depth; material attributes and normal maps therefore have no way of influencing this pass.
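As an illustration of what "reconstructing normals from depth" can mean, here is a minimal sketch that unprojects a pixel and two of its neighbours to view space and takes the cross product of the resulting edges. The projection parameters and function names are assumptions made for the example; the game's shader was not decompiled for this.

```python
import math


def view_position(x, y, depth, width, height, tan_half_fov_y=1.0):
    """Unproject the centre of pixel (x, y) to view space (+Z is forward)."""
    ndc_x = (x + 0.5) / width * 2.0 - 1.0
    ndc_y = 1.0 - (y + 0.5) / height * 2.0  # screen y grows downwards
    aspect = width / height
    return (ndc_x * tan_half_fov_y * aspect * depth,
            ndc_y * tan_half_fov_y * depth,
            depth)


def normal_from_depth(depth, x, y):
    """Estimate the view-space normal at pixel (x, y).

    `depth` is a 2D list (rows of columns) of linear view-space depths.
    The pixel must have a right and a bottom neighbour.
    """
    height, width = len(depth), len(depth[0])
    p = view_position(x, y, depth[y][x], width, height)
    right = view_position(x + 1, y, depth[y][x + 1], width, height)
    down = view_position(x, y + 1, depth[y + 1][x], width, height)
    ex = tuple(a - b for a, b in zip(right, p))
    ey = tuple(a - b for a, b in zip(down, p))
    # Cross product of the two surface edges gives a vector normal to the surface.
    n = (ex[1] * ey[2] - ex[2] * ey[1],
         ex[2] * ey[0] - ex[0] * ey[2],
         ex[0] * ey[1] - ex[1] * ey[0])
    length = math.sqrt(sum(c * c for c in n))
    n = tuple(c / length for c in n)
    # Make the normal face the camera, which sits at the view-space origin.
    if sum(a * b for a, b in zip(n, p)) > 0.0:
        n = tuple(-c for c in n)
    return n


flat_wall = [[5.0] * 4 for _ in range(4)]
print(normal_from_depth(flat_wall, 1, 1))  # points straight back at the camera
```

A normal obtained this way only knows about the geometry, which is consistent with normal maps having no effect on this pass.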

Final image:

Sphere for green light

Main scene pass

During this pass all of the elements from the first pass are redrawn plus transparent objects and particles. Not all of them are that interesting, so let’s just talk about the most interesting ones.

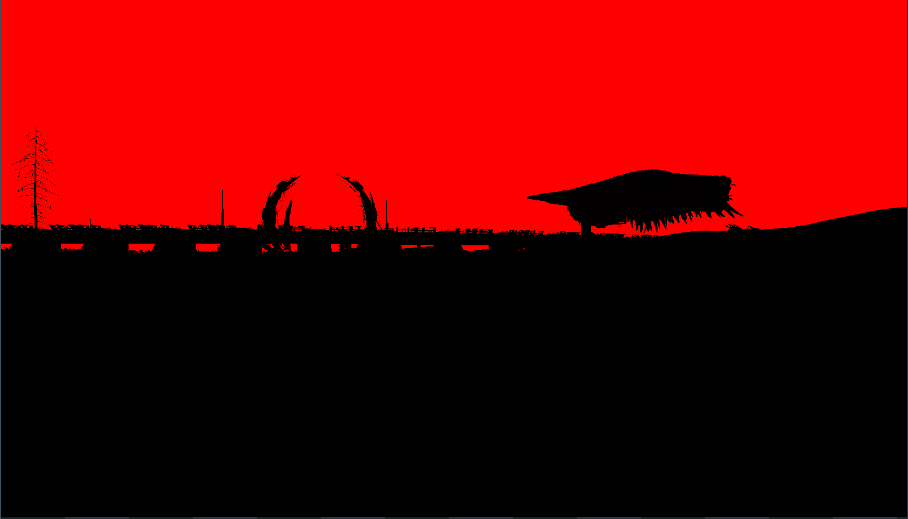

Background bridge

Instanced rendering is used to draw the various sections.

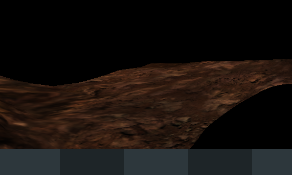

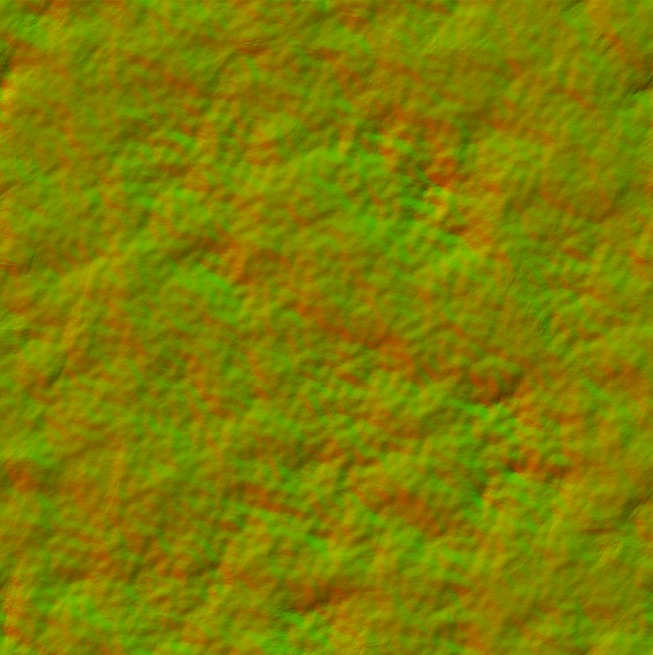

Terrain

These slides go into some detail on how the terrain system works. In any case, the terrain is drawn in chunks:

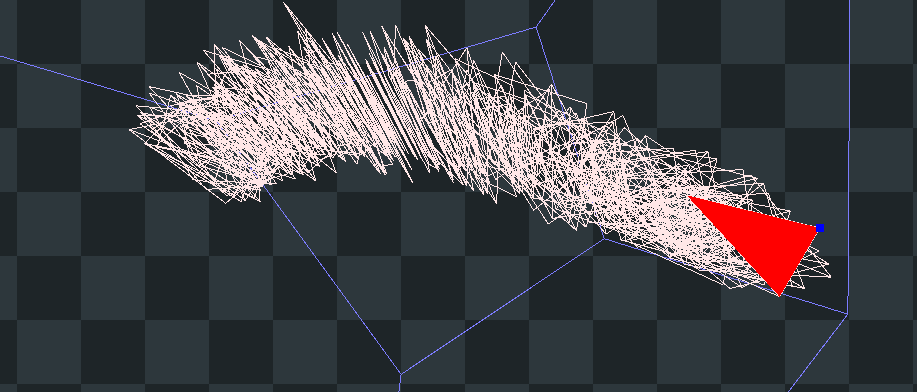

A vertex buffer contains the following mesh

which the vertex shader transforms into the final terrain mesh for the current chunk.

Chunk size can vary based on distance and required resolution.
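The idea of reusing one flat grid for every chunk can be sketched as follows. The `sample_height` function is a hypothetical stand-in for whatever heightmap lookup the real vertex shader performs, and the chunk origins and sizes are made up for the example.

```python
import math


def make_grid(n):
    """Flat (n+1) x (n+1) grid in the unit square, shared by every chunk."""
    return [(i / n, j / n) for j in range(n + 1) for i in range(n + 1)]


def sample_height(x, z):
    """Hypothetical stand-in for a heightmap lookup."""
    return 10.0 * math.sin(x * 0.01) * math.cos(z * 0.01)


def terrain_vertex(grid_vertex, chunk_origin, chunk_size):
    """What a vertex shader could do: place the grid vertex inside the
    chunk, then lift it to the terrain height at that position."""
    u, v = grid_vertex
    x = chunk_origin[0] + u * chunk_size
    z = chunk_origin[1] + v * chunk_size
    return (x, sample_height(x, z), z)


grid = make_grid(4)
# The same grid covers a small nearby chunk and a large distant one.
near_chunk = [terrain_vertex(g, (0.0, 0.0), 64.0) for g in grid]
far_chunk = [terrain_vertex(g, (256.0, 0.0), 256.0) for g in grid]
print(near_chunk[-1], far_chunk[-1])
```

Because the vertex count per chunk is constant, larger chunks automatically get a coarser mesh, which matches the observation that chunk size depends on distance and required resolution.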

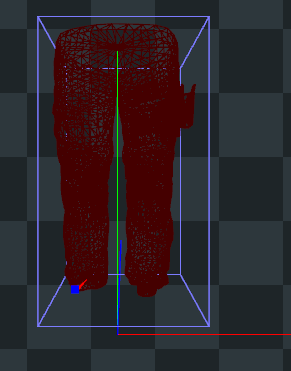

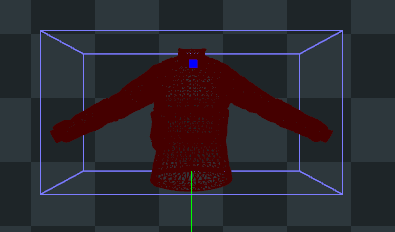

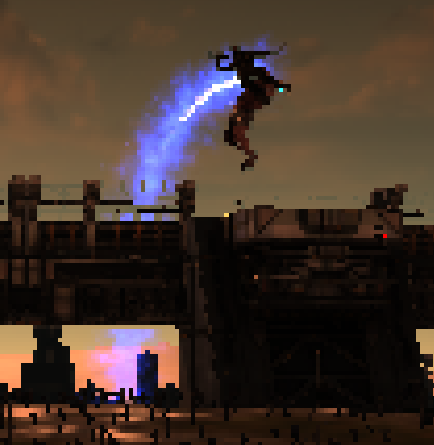

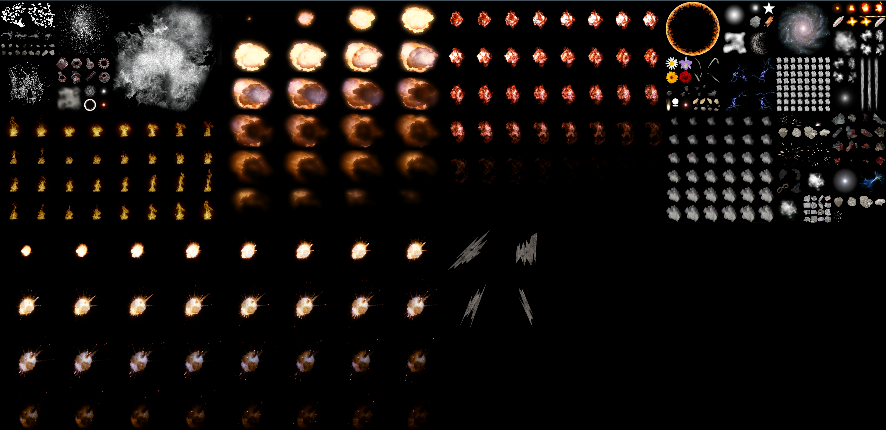

Legion system

Finally, let’s dig right into the legion system. Closer enemies and NPCs are rendered normally in several drawcalls:

Interestingly, the gun for this NPC has 58,341 triangles, despite covering only a couple dozen pixels. Next, for faraway enemies an impostor mesh is generated, containing a quad for each enemy.

Their textures are stored in an atlas that seems to contain animation frames as well as various orientations.

In just one drawcall tens of thousands of distant enemies are drawn. The process is repeated for different types of enemies.

The vertex buffer is probably uploaded from the CPU at every frame.
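Here is a sketch of how such an impostor vertex buffer could be built on the CPU each frame. The atlas layout (one row per orientation, one column per animation frame), the vertex format and the frame rate are all assumptions for illustration; the real layout was not reverse engineered.

```python
import math

ORIENTATIONS = 8  # hypothetical: atlas rows, one per viewing direction
FRAMES = 16       # hypothetical: atlas columns, one per animation frame


def atlas_rect(yaw_to_camera, anim_time, fps=15.0):
    """Pick the atlas cell for one enemy; returns (u0, v0, u1, v1)."""
    step = 2.0 * math.pi / ORIENTATIONS
    row = int((yaw_to_camera % (2.0 * math.pi)) / step + 0.5) % ORIENTATIONS
    col = int(anim_time * fps) % FRAMES
    return (col / FRAMES, row / ORIENTATIONS,
            (col + 1) / FRAMES, (row + 1) / ORIENTATIONS)


def build_impostor_vertices(enemies, half_width=0.5, height=2.0):
    """One quad (4 vertices) per enemy.

    Each vertex is (x, y, z, corner_x, corner_y, u, v); the corner offsets
    would be expanded towards the camera by the vertex shader.
    """
    vertices = []
    for (x, y, z, yaw, anim_time) in enemies:
        u0, v0, u1, v1 = atlas_rect(yaw, anim_time)
        vertices += [
            (x, y, z, -half_width, 0.0, u0, v1),
            (x, y, z, half_width, 0.0, u1, v1),
            (x, y, z, half_width, height, u1, v0),
            (x, y, z, -half_width, height, u0, v0),
        ]
    return vertices


horde = [(float(i), 0.0, 0.0, 0.0, 0.0) for i in range(10000)]
print(len(build_impostor_vertices(horde)), "vertices for one drawcall")
```

With everything packed into one buffer, the whole horde of a given enemy type can be submitted in a single drawcall.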

Sky

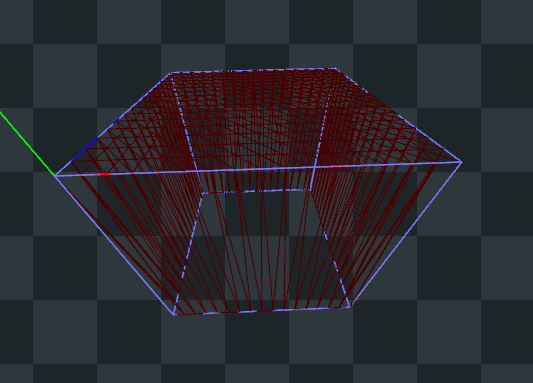

For the sky a cube is rendered in 6 separate drawcalls (one for each side):

The sky itself is stored in a 2D texture.

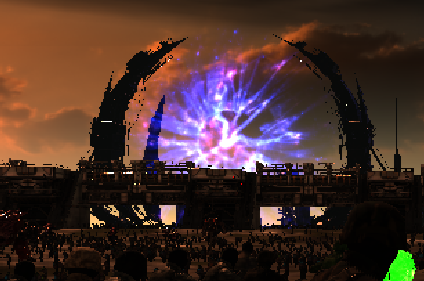

Portal

First a textured quad with alpha blending is rendered:

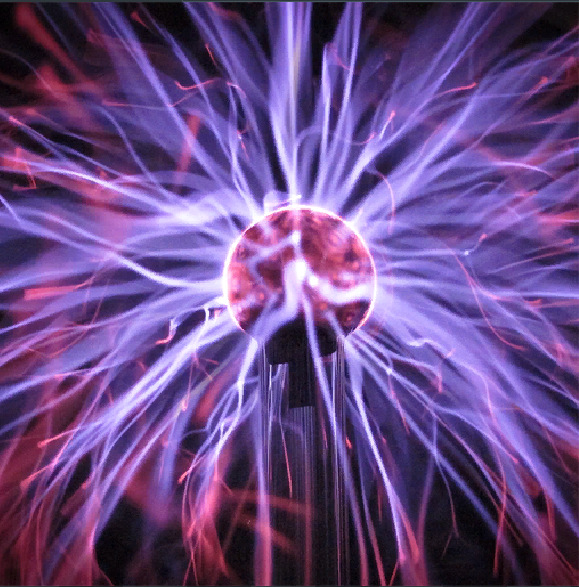

Then a bumpy circle mesh is rendered multiple times with different textures:

In 3 passes the portal effect is rendered:

This one does distortion:

Particles

Particles are rendered in a single drawcall. I suppose the vertex buffers are built and the particle simulation is run on the CPU.

Bloom

At this point, in order to create a bloom effect, the frame buffer is downscaled and upscaled multiple times. During the first upscale the dark parts of the buffer are rendered as black.

Various upscaling passes are omitted. At the end a 408×230 image is obtained.
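The general shape of such a bloom chain can be sketched on a tiny grayscale image. This is a generic bright-pass plus down/upsample blur, not the game's exact sequence of passes; the threshold, the number of levels and the box filter are assumptions.

```python
def bright_pass(image, threshold=1.0):
    """Keep only what exceeds the threshold; darker pixels become black."""
    return [[max(p - threshold, 0.0) for p in row] for row in image]


def downsample(image):
    """Halve the resolution by averaging each 2x2 block (even sizes assumed)."""
    return [[(image[y][x] + image[y][x + 1]
              + image[y + 1][x] + image[y + 1][x + 1]) / 4.0
             for x in range(0, len(image[0]), 2)]
            for y in range(0, len(image), 2)]


def upsample(image):
    """Double the resolution by repeating each pixel (nearest neighbour)."""
    out = []
    for row in image:
        wide = [p for p in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def bloom(image, levels=2, threshold=1.0):
    """Blur the bright parts by going down and back up the resolution chain."""
    result = bright_pass(image, threshold)
    for _ in range(levels):
        result = downsample(result)
    for _ in range(levels):
        result = upsample(result)
    return result


frame = [[0.0] * 4 for _ in range(4)]
frame[0][0] = 5.0  # a single very bright HDR pixel
for row in bloom(frame):
    print(row)
```

The single bright pixel ends up spread over the whole 4x4 image, which is the soft glow that later gets mixed back into the frame.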

Mixing and tone mapping

So far the image has been rendered into an HDR render target. This pass does tone mapping and mixes the result with the bloom buffer.
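A minimal sketch of this kind of pass, assuming a simple Reinhard operator and an additive bloom mix. Both are assumptions: the tone mapping curve and the blend actually used by the game were not identified.

```python
def tonemap_reinhard(x):
    """Reinhard operator: maps [0, inf) into [0, 1). ASSUMED, not confirmed."""
    return x / (1.0 + x)


def composite(hdr_pixel, bloom_pixel, bloom_strength=0.5):
    """Add the bloom contribution to the HDR colour, then tone map each channel.

    `bloom_strength` is a hypothetical mixing factor.
    """
    return tuple(tonemap_reinhard(h + bloom_strength * b)
                 for h, b in zip(hdr_pixel, bloom_pixel))


print(composite((3.0, 1.0, 0.0), (0.0, 0.0, 0.0)))
print(composite((1.0, 1.0, 1.0), (2.0, 2.0, 2.0)))
```

Whatever the exact curve is, the point is the same: values above 1.0 in the HDR target are compressed into the displayable range instead of being clipped.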

FXAA

Before FXAA:

After FXAA:

UI

At this point the UI is rendered on top of the LDR buffer, and we get the final frame:

Lighting

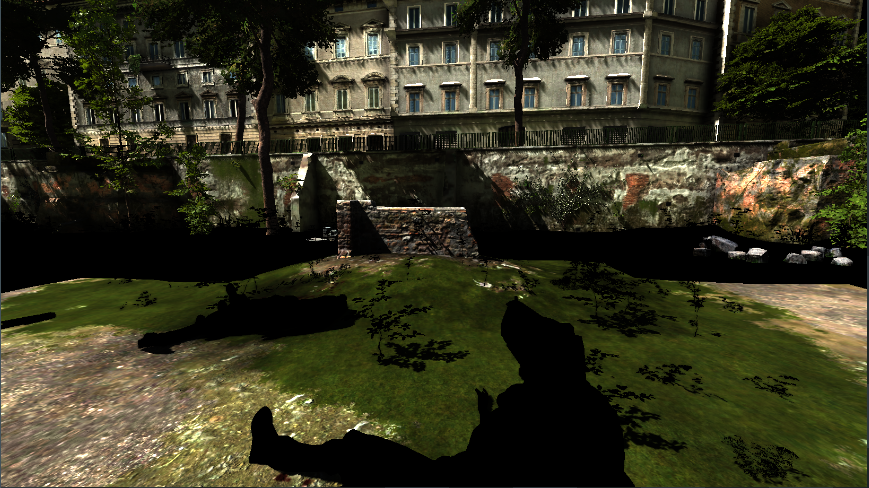

During the second pass the pixel shader calculates all of the lighting. Let’s see how it works by dissecting a different frame:

Terrain patch

To be precise we are going to look at the shader inputs for one of the terrain patches:

Some of the shader inputs are buffers or black images, but some have a much clearer purpose. This one looks like albedo:

Some kind of normal map:

One of the faces of a cubemap (used for specular reflections):

A texture that seems to contain a light map:

This is the lightmap for a different model:

Sun shadow map:

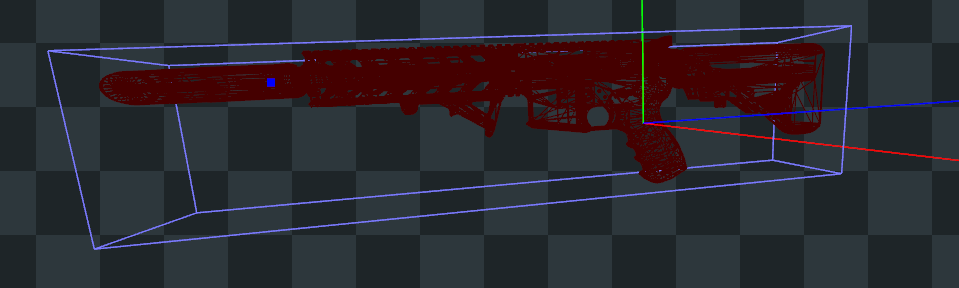

Gun

Let’s also see the shader inputs for the gun:

Cubemap face:

Normal map:

Albedo:

Not sure about this one, probably contains several material attributes in various channels:

Sun shadow map:

Smoke

The smoke is rendered as particles. They work like the ones we’ve seen before, but these are lit. The shader has (among other things) these two images as input.

Conclusion

The game doesn’t make use of many compute shaders; instead, many passes just render a quad to fill the entire screen. Some compute shaders are used at the very beginning for a purpose that I haven’t managed to figure out.

Overall the rendering is mainly done like in previous games of the series and has nothing special, but despite that the overall look is pleasing to the eye.

Despite the simplicity of the rendering pipeline the game requires a pretty good PC to run properly.

(The article being in English raises the question of how to reply... By the way, it's not ideal for Google indexing.)

Interesting work. One important thing: when you do this kind of analysis, get into the habit of logging and specifying as much of your setup as possible: HW, OS and version, driver and version, and ideally also saving and sharing the RenderDoc trace... CG already has a big reproducibility problem, and there is a collective effort under way to try to solve it, also (and especially) on the research side, so get used to always working in this direction.

As for the rest, I haven't really read everything, but the use of FXAA struck me as odd. I imagine you read somewhere that it is used, or saw it directly in the game options, since with RenderDoc attached to a binary you can't see the shader code, or am I wrong? (I haven't used RD in quite a while 😃). Looking at the rest of the pipeline, I don't see anything that really “forces” AA to be done in post-processing, such as a heavily deferred pipeline, decals, etc. It would be interesting to see whether the pipeline changes when MSAA is selected (Google says that is possible in this game)...

Replying in Italian is perfectly fine. I wrote in English so that I could publish the article elsewhere and have it read by people who don't know Italian.

My setup is a bit complicated: I used a VM with GPU passthrough. The OS in the VM is Windows 10, and the driver version is 20.9.1.

The host has the following HW:

- CPU: i7-3770

- RAM: 16 GB DDR3 1600 MHz

- GPU: RX 580 8 GB

I made several captures, some in DX11 and others in DX12. When I tried to capture Vulkan, RenderDoc crashed.

Using DX11, the shader that does FXAA has this name:

RenderDoc can show the shader's assembly and DXIL.

In the game's presets FXAA is always enabled, but MSAA and FXAA can be controlled independently in the advanced options.

In the post I didn't talk about SSAO, which is “hidden” in the alpha channel of an RGBA texture with nothing in the other channels.

I could write another post comparing it with previous titles (which behave in a very similar way).

Part two

I did some more digging and found a few more things:

Explaining the colorful buffer

I’m talking about this one to be precise:

After having a look at the shader’s assembly code it turns out it encodes depth. I actually suspected that but I wasn’t too sure. Still no clue on why it’s done this way.
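For reference, one common way to end up with a colorful depth image is to split a high-precision depth value across several 8-bit channels. The sketch below shows that idea only; whether the game uses this particular packing is an assumption and was not verified against the shader.

```python
def encode_depth(depth):
    """Pack a depth in [0, 1) into three 8-bit channels (24 bits in total).

    HYPOTHETICAL scheme for illustration; not taken from the game's shader.
    """
    value = int(depth * (1 << 24))
    value = min(max(value, 0), (1 << 24) - 1)
    return ((value >> 16) & 0xFF, (value >> 8) & 0xFF, value & 0xFF)


def decode_depth(rgb):
    """Inverse of encode_depth: rebuild the depth from the three channels."""
    r, g, b = rgb
    return ((r << 16) | (g << 8) | b) / (1 << 24)


for d in (0.1, 0.5, 0.999):
    rgb = encode_depth(d)
    print(d, rgb, decode_depth(rgb))
```

The low-order channels wrap around very quickly as depth changes, which is what produces the banded, rainbow-like look when the buffer is viewed as a color image.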

SSAO

SSAO is stored in the alpha channel of an RGBA8 texture. The RGB part is white everywhere except for the sky which is black.

It is done by a pixel shader invoked on a screen-filling quad. Its inputs are depth and a noise texture.

At some point it gets filtered and is then mixed in during the second scene pass.
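To give an idea of what an SSAO pass computes from just depth and noise, here is a deliberately crude sketch: each pixel looks at a few neighbours at noise-driven distances and counts how many are closer to the camera. The kernel, radius and bias are assumptions, and a seeded random generator stands in for the noise texture; the game's shader is certainly more refined.

```python
import random

DIRECTIONS = [(-1, -1), (0, -1), (1, -1), (-1, 0),
              (1, 0), (-1, 1), (0, 1), (1, 1)]


def ssao(depth, radius=2, bias=0.05, seed=0):
    """Return per-pixel ambient occlusion in [0, 1] (1 = fully unoccluded).

    `depth` is a 2D list of linear depths. The seeded generator plays the
    role of the noise texture by varying the sampling distance.
    """
    rng = random.Random(seed)
    height, width = len(depth), len(depth[0])
    ao = []
    for y in range(height):
        row = []
        for x in range(width):
            occluded = 0
            for dx, dy in DIRECTIONS:
                step = rng.randint(1, radius)
                sx = min(max(x + dx * step, 0), width - 1)
                sy = min(max(y + dy * step, 0), height - 1)
                # A neighbour clearly closer to the camera occludes this pixel.
                if depth[sy][sx] < depth[y][x] - bias:
                    occluded += 1
            row.append(1.0 - occluded / len(DIRECTIONS))
        ao.append(row)
    return ao


pit = [[1.0] * 5 for _ in range(5)]
pit[2][2] = 2.0  # one pixel lies deeper than everything around it
print(ssao(pit)[2][2], ssao(pit)[0][0])
```

The noisy sampling is also why a filtering step is needed afterwards: without it the raw result looks grainy.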

Weird point light

Point lights in this game are usually fairly limited but this one was doing a bit more than usual:

First it gets rendered into the light buffers just like any other lamp. The intensity of the light is varied according to a cube map; that’s why it seems like the barrel casts a shadow, although in reality it does not. Earlier titles of the series had that too.
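Modulating a light by a cube map boils down to looking up the direction from the light to the surface. A sketch of that lookup, using the usual cube map face convention, is shown below; the `mask` callable is a hypothetical stand-in for sampling the actual texture.

```python
def cube_face_uv(direction):
    """Map a non-zero direction to (face, s, t) with s, t in [0, 1]."""
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:
        face, sc, tc, ma = ("+x", -z, -y, ax) if x > 0 else ("-x", z, -y, ax)
    elif ay >= az:
        face, sc, tc, ma = ("+y", x, z, ay) if y > 0 else ("-y", x, -z, ay)
    else:
        face, sc, tc, ma = ("+z", x, -y, az) if z > 0 else ("-z", -x, -y, az)
    return face, (sc / ma + 1.0) / 2.0, (tc / ma + 1.0) / 2.0


def masked_intensity(light_pos, surface_pos, base_intensity, mask):
    """Scale the light by the cube map value in the light-to-surface direction.

    `mask(face, s, t)` is a hypothetical sampler returning a value in [0, 1].
    """
    direction = tuple(s - l for s, l in zip(surface_pos, light_pos))
    return base_intensity * mask(*cube_face_uv(direction))


def dark_below(face, s, t):
    """Toy mask: everything below the light is blacked out."""
    return 0.0 if face == "-y" else 1.0


print(masked_intensity((0.0, 2.0, 0.0), (0.0, 0.0, 0.0), 5.0, dark_below))
print(masked_intensity((0.0, 2.0, 0.0), (3.0, 2.0, 0.0), 5.0, dark_below))
```

Since the mask is baked into a texture, the "shadow" stays fixed relative to the light and does not react to objects moving in the scene.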

Shadows

Now, during the second pass on the scene, this model gets rendered:

Yes, that’s a piece of geometry from the level…

A depth cube map is given as an input, one of the faces looks like this:

And this is what gets rendered in the HDR buffer:

Now that’s weird, why aren’t shadows calculated in the first light pass? Is the same shadow map applied to all lights?

Is the light buffer ignored for this model? Does it subtract the light contribution on shadowed parts? I don’t know, but I might look into the shader and update this post.

Floor

You might have noticed that the floor hasn’t been rendered yet. Well, not to worry, because it’s going to be rendered not once but three times now.

I can’t tell the difference between the first two passes; they both look like this:

(notice that contribution from other point lights is taken into account)

But on the third pass specular light from that light source is added

Volumetrics

At this point this geometry gets drawn:

With this texture as an input to the pixel shader:

The result isn’t too bad:

Update

Thanks to a user who created a custom map for me I was able to better understand how the different types of point lights are handled.

There are mainly two types:

- Dynamic omni lights

- Fast dynamic omni lights

The custom map is divided into two rooms, each containing two lights of each type.

Fast omni lights

First they are rendered using the usual sphere

Normal maps aren’t taken into account

After that, a fragment shader run on a quad filters the SSAO buffer and stores the result in the alpha channel of a texture that otherwise looks blue.

That alpha channel gets copied (using a fragment shader on a quad) to the alpha channel of the light buffer.

Scene pass

Now I’ve chosen a part of the scene geometry that is lit by all lights.

It is rendered two times. First, the contribution of the fast lights plus one of the dynamic lights is written to the HDR buffer.

Notice the shadow in the back of the short hallway.

The alpha channel of the lights buffer is multiplied with the other channels.

Essentially this applies SSAO to the lighting.
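In other words, per pixel the operation amounts to something like the following sketch (the tuple layout is just for illustration):

```python
def apply_ao(light_rgba):
    """Multiply the light colour by the occlusion stored in the alpha channel."""
    r, g, b, ao = light_rgba
    return (r * ao, g * ao, b * ao)


print(apply_ao((2.0, 1.0, 0.5, 0.5)))  # a half-occluded pixel gets half the light
print(apply_ao((2.0, 1.0, 0.5, 1.0)))  # an unoccluded pixel is left unchanged
```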

It is then rendered once again in order to add the second dynamic light’s contribution.

Notice there are two shadows now.

Normals are taken into account for dynamic lights

But since fast lights are calculated before the normal map is ever read from the material, it isn’t taken into account for them.
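The visible difference can be illustrated with a plain Lambert term evaluated once with the geometric normal (what a fast light has available) and once with a normal perturbed by a normal map (what a dynamic light can use). The vectors below are made-up values, and Lambert diffuse is an assumption about the shading model.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def lambert(normal, to_light):
    """Diffuse term: cosine of the angle between normal and light direction."""
    n, l = normalize(normal), normalize(to_light)
    return max(0.0, sum(a * b for a, b in zip(n, l)))


to_light = (0.0, 1.0, 0.0)          # light directly above a floor
geometric_normal = (0.0, 1.0, 0.0)  # flat floor polygon
mapped_normal = (0.6, 0.8, 0.0)     # hypothetical value read from a normal map

print("fast light:   ", lambert(geometric_normal, to_light))
print("dynamic light:", lambert(mapped_normal, to_light))
```

With the fast light every pixel of the flat floor gets the same diffuse value, while the dynamic light varies with the bumps encoded in the normal map.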